If you’re one of the 11.6M moviegoers who have seen the latest Top Gun movie, you may have done a double take after hearing Val Kilmer briefly “speak” at the end of Iceman and Maverick’s highly anticipated reunion. In real life, Kilmer lost the ability to speak after undergoing cancer treatment in 2014. He partnered with Sonantic, a speech AI company, to build a custom text-to-speech model tuned to sound like him, using hours of archival footage that he supplied.

Oh, you’re a Star Wars fan?

Lucasfilm took a similar approach to bring Luke Skywalker back to life in The Mandalorian and The Book of Boba Fett. The fact that synthesized speech is being used in blockbuster films like Top Gun and the Star Wars series underscores how far the underlying technology has come in the past few years, and how accurate and life-like synthesized speech can now sound.

Your first thought after watching one of these movies likely wasn’t how this technology could impact your daily life. Leave that to us.

AI and video editing at Webex

We have an experienced team of AI and ML engineers, the same team that built the speech-to-text capabilities that power closed captions for Webex Meetings. Next, this team will ship AI editing of videos, which will use custom text-to-speech models generated in the creator’s voice.

A Vidcast refresher

Vidcast is an asynchronous video messaging application we launched last August that extends the value of the Webex Suite to more use cases. Over the past ten months, Vidcast has seen triple-digit growth quarter over quarter. The Vidcast team has also launched 35+ new features, garnering raving fans along the way. As companies around the world, including Cisco, increasingly embrace hybrid work, it’s no surprise asynchronous video is seeing explosive growth, due in part to its ability to combat meeting fatigue.

Very quickly after launching Vidcast, we committed to a strong focus on our enterprise creators. They’re the power users of the platform, driving viral adoption of viewers who also become future creators. Early on, based on user interviews, we found it would take on average 3.5 takes to get a creator comfortable with sharing a video in a social feed or in a sales context. This led us to fast-track features that would help a creator “get it on the first take.” Obsessing over the editing experience has led to widespread, enduring adoption.

What do Top Gun and Star Wars have to do with Vidcast?

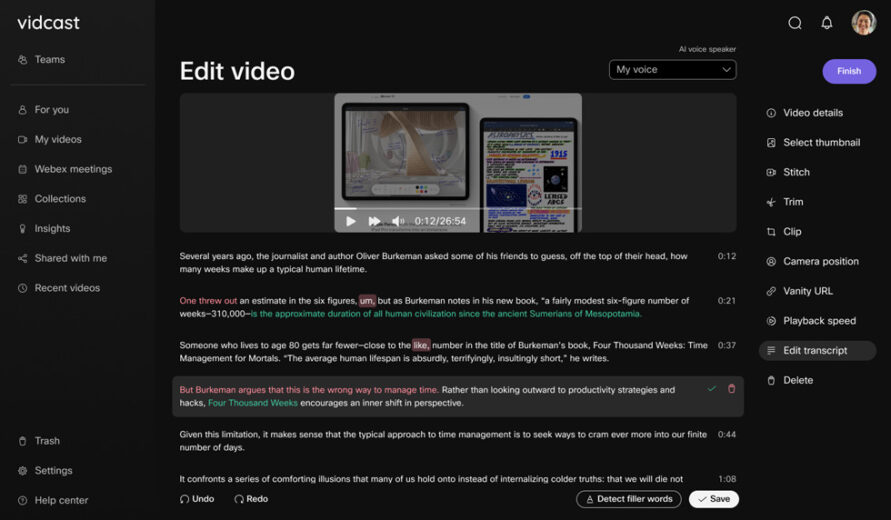

The team has developed a transcript editing tab within the Vidcast “creator panel.” From here, a creator will be able to edit the transcript text (for example, to correct the spelling of a name or acronym). They will also be able to use the transcript to quickly delete filler words (such as “um,” “ah,” and “like”), phrases, or entire sentences, and Vidcast will automatically clip the video to align with the updated transcript.
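To make the idea of clipping a video from its transcript concrete, here is a minimal sketch. It is not Vidcast’s implementation. It assumes the speech-to-text step provides a start and end time for every word, and the names `Word`, `filler_indices`, and `keep_segments` are hypothetical. Deleting words from the transcript then reduces to computing which time spans of the recording to keep.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    text: str
    start: float  # seconds from the beginning of the recording
    end: float


# Hypothetical filler list; "like" is left out because it is often a real word.
FILLERS = {"um", "uh", "ah"}


def filler_indices(words):
    """Return the indices of words that look like filler words."""
    return {
        i for i, w in enumerate(words)
        if w.text.lower().strip(".,!?") in FILLERS
    }


def keep_segments(words, deleted):
    """Return (start, end) spans to keep after deleting the given word indices.

    Consecutive kept words are merged into one span, so each deletion
    produces exactly one cut in the video.
    """
    segments = []
    for i, w in enumerate(words):
        if i in deleted:
            continue
        if segments and (i - 1) not in deleted:
            # The previous word was kept: extend the current span.
            segments[-1] = (segments[-1][0], w.end)
        else:
            # First kept word, or first kept word after a deletion.
            segments.append((w.start, w.end))
    return segments


words = [
    Word("So", 0.0, 0.3),
    Word("um", 0.3, 0.8),
    Word("welcome", 0.8, 1.4),
    Word("everyone", 1.4, 2.0),
]
print(keep_segments(words, filler_indices(words)))
```

A video tool could then cut the recording to the returned spans and join them. A production editor would also need to smooth the audio at each cut, which this sketch ignores.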

Additionally, Cisco Live attendees will get a special technology preview. We’ll showcase a working prototype that allows creators to make transcript insertions, and we’ll show how creators can insert matching synthesized audio using custom text-to-speech models tuned to their own voice. To build each model, we apply transfer learning to the creator’s previously recorded Vidcasts, tuning a text-to-speech model to sound like the speaker in that training data. The model continues to improve over time as the user creates more Vidcast content. This will be a game changer in reducing the time creators spend on additional takes to get a final video.

And if you forget a key point in your talk track, you’ll be able to simply add the sentence to your transcript and Vidcast will synthesize the audio for you.

We’ve demoed the feature to users in sales roles, and the feedback has been very positive:

“For anything I’m sharing with customers directly or posting to channels like LinkedIn, I want the content to be nearly perfect – and it can take me several takes to get everything just right. AI-enabled editing tools that would make it easy to remove a phrase or sentence and clip the video with the right alignment (so the end result is smooth and polished) would reduce the number of takes, and be a massive time saver!”

—Ashley Fareno

Easier pitches and demos with Vidcast

Another intriguing use case allows sales reps to quickly and easily customize a pitch or demo video. Using AI editing, they could add a personal intro to the beginning of their video, or even call out specific features during their demo that address a particular customer’s pain point, without having to re-record a version of the demo for every customer.

Intelligent discovery

In addition to AI editing, we have another exciting application of AI: intelligent discovery. Our team has been building intelligent discovery into a Vidcast homepage, which will include a feed of videos “recommended for you.”

This feed will be based on the content and creators users watch, what’s trending in their organization, and which teams they’re part of. After taking the time and care to create polished content, creators want their content to be seen and consumed. This new homepage will make new content discoverable and drive up views for power creators, as well as help viewers discover trending and relevant Vidcast content every time they log into the platform. Video discoverability has proven to be a huge force for consumer creators. Now, that powerful capability has been tailored for enterprise creators.
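As a rough illustration of how signals like these could be combined into a feed, here is a small sketch. It is not how the Vidcast homepage ranks videos. The `Video` fields, the weights, and the `score` and `recommend` functions are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Video:
    title: str
    creator: str
    team: str
    org_views_this_week: int


def score(video, watched_creators, user_teams, max_org_views):
    """Combine three signals into one number; the weights are illustrative."""
    total = 0.0
    if video.creator in watched_creators:
        total += 1.0  # the viewer already watches this creator
    if video.team in user_teams:
        total += 0.5  # the video comes from one of the viewer's teams
    if max_org_views > 0:
        # Trending signal, normalized to the range 0..1.
        total += video.org_views_this_week / max_org_views
    return total


def recommend(videos, watched_creators, user_teams, limit=3):
    """Return up to `limit` videos, highest score first."""
    max_views = max((v.org_views_this_week for v in videos), default=0)
    ranked = sorted(
        videos,
        key=lambda v: score(v, watched_creators, user_teams, max_views),
        reverse=True,
    )
    return ranked[:limit]


videos = [
    Video("A", "ana", "sales", 10),
    Video("B", "ben", "eng", 100),
    Video("C", "cy", "sales", 80),
]
feed = recommend(videos, watched_creators={"ana"}, user_teams={"sales"})
print([v.title for v in feed])
```

In this example a video from a creator the viewer follows outranks a video that is merely popular, which is one possible design choice. A real system would likely learn such weights from viewing data rather than hard-code them.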

While we built Vidcast to be a flexible tool that anyone can use, a few key use cases have stood out over the past ten months. In particular, we’ve seen triple-digit growth in sales, training, and customer success use cases. These creators see 8-10x higher engagement on posts that include Vidcast videos than on posts that don’t. We also see high engagement in emails that use Vidcasts, with an average conversion rate of 67% for opened outreach emails. Our new AI-enabled features will resonate with creators and viewers alike. Finally, in addition to higher engagement and better conversions, time saved is a key driver. For example, we chatted with one of our customers in the learning and development category, and this is what they shared:

“We recently leveraged Vidcast to transform a 90-minute monthly live learning session into an asynchronous, on-demand module comprised of bite-sized videos (< 10 min) ready for learners when they’re available to consume. Vidcast eliminated the pressure and need for approximately 150 team members to break momentum in the workflow.”

—Laura Hamill

A note on responsible AI

We’re conscientious about the consequences of AI editing. With this kind of algorithmic power, it is critical to use this technology responsibly. In the wrong hands, it could be used to create deepfakes. To avoid this, we’ve taken a few important steps.

First, we’ve limited the feature to our power creators, who must meet two conditions: a) they must have already created over one hour of Vidcasts that serve as training material for the algorithm, and b) they have to opt in to allow us to use that content for training. After we develop the custom models for those creators, we only make them usable for editing in their logged-in accounts. These same models are not accessible via APIs and are not directly accessible for use outside the feature. That way we can limit these capabilities to responsible use behind a secure login within Vidcast.
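The two conditions above amount to a simple gate. The sketch below shows one way to express it. The `Creator` type, its field names, and the function name are hypothetical and not part of any Vidcast API.

```python
from dataclasses import dataclass

# "Over one hour" of recorded Vidcasts, expressed in seconds.
MIN_TRAINING_SECONDS = 60 * 60


@dataclass(frozen=True)
class Creator:
    recorded_seconds: float  # total length of the creator's Vidcasts
    opted_in: bool           # explicit consent to train on that content


def eligible_for_voice_model(creator):
    """Both conditions must hold: consent and more than one hour of content."""
    return creator.opted_in and creator.recorded_seconds > MIN_TRAINING_SECONDS


print(eligible_for_voice_model(Creator(recorded_seconds=5400, opted_in=True)))
print(eligible_for_voice_model(Creator(recorded_seconds=5400, opted_in=False)))
```

Checking consent as part of the same gate means that recorded content alone is never enough to trigger training.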

Feedback, please!

It has been an extremely high-growth first year here at Vidcast. We’re really excited to ship these AI-enabled features that will help our users save time in a meaningful way. Learning and iterating are part of our operating culture, so we’d love to hear how these capabilities help you. Please send us your feedback!

***

Interested in seeing a demo of the features mentioned above? Please email us at support@vidcast.io.